Published 19 July 2023

Data in Chemistry: An Overview

By Thomas Galeandro-Diamant, Director of Products and Services, and Alexandre Lohest, Data Science intern

Performing chemistry experiments or simulations means generating and collecting data. All that data can be analysed to generate insights and used to feed predictive or generative AI models. The value you can get from these insights and AI models depends tremendously on the data you use. In this article, we will give you an overview of the most important characteristics that your data must have in order to be valuable.

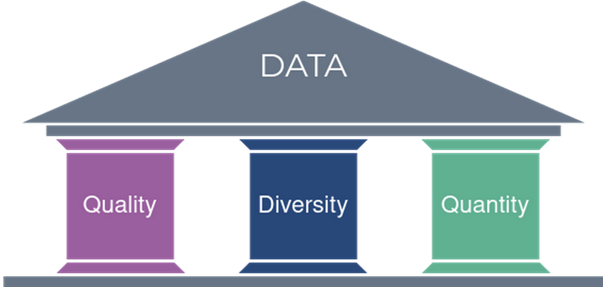

The 3 pillars of data

The most important characteristic of data is its quality, since both sound data analysis and accurate machine learning models rely on high-quality data. Data quality covers several sub-criteria such as correctness, uniqueness, completeness and precision, as seen in this post.

Implementing a data quality control process is a good way of assessing the quality of data. If the quality of a dataset is not optimal, a common and easy way to improve it is to detect outliers (data points that strongly differ from the rest of the dataset) and to exclude them from the analysis and from model training.
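As an illustration of outlier detection, here is a minimal sketch using Tukey's interquartile-range (IQR) rule, which is one common choice among many and not necessarily the method used in any particular quality control process. The function name `flag_outliers_iqr`, the threshold `k=1.5` and the yield values are all hypothetical, chosen only for the example.

```python
from statistics import quantiles


def flag_outliers_iqr(values, k=1.5):
    """Return one boolean per value: True if it lies outside
    [Q1 - k*IQR, Q3 + k*IQR] (Tukey's rule)."""
    q1, _, q3 = quantiles(values, n=4)  # quartiles of the data
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v < low or v > high for v in values]


# Hypothetical yields (%) from repeated runs of the same reaction
yields = [78.2, 80.1, 79.5, 81.0, 77.9, 12.3, 80.4, 79.0]

flags = flag_outliers_iqr(yields)
kept = [y for y, is_outlier in zip(yields, flags) if not is_outlier]
print(kept)  # the 12.3 % run is flagged and left out
```

A flagged point is only a candidate for exclusion: it is worth checking whether it comes from a measurement error or from a genuine, informative result before dropping it.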

To elaborate on the completeness of data, let’s take an example. When performing a chemical reaction in a laboratory, instead of just reporting the conversion and yield in a laboratory notebook, it is valuable to also integrate the chemical analysis data that has been generated (e.g. NMR spectra, LC/MS or GC/MS spectra [1], IR spectra, etc.). Why? Because that additional data depicts the experiment you are performing in a more complete manner.

In the same way, instead of just recording the most evident experiment parameters, it is wise to record all experiment parameters, even those that may only have a weak effect on the experiment outcome. Since all of this data will later be analysed and used to build machine learning models, models trained on that richer data are likely to be of higher quality. Completeness is also related to traceability and context: you should know as much as possible about how the data was generated (who, when, what equipment was used, what batch of chemicals, what experimental procedure, and so on).
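To make the idea of a complete, traceable record more concrete, here is a minimal sketch of how one experiment could be stored. The `ExperimentRecord` class, its field names and all the values are hypothetical; a real laboratory would adapt them to its own notebook or LIMS conventions.

```python
from dataclasses import dataclass, field, asdict
from datetime import date
from typing import Optional


@dataclass
class ExperimentRecord:
    # Traceability and context: who, when, equipment, batches, procedure
    operator: str
    run_date: date
    equipment_id: str
    reagent_batches: dict[str, str]
    procedure_ref: str
    # All experiment parameters, including those with a weak expected effect
    parameters: dict[str, float]
    # Outcomes and links to the raw analysis data (NMR, LC/MS, IR, ...)
    conversion_pct: Optional[float] = None
    yield_pct: Optional[float] = None
    analysis_files: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Names of fields that are still empty, as a basic completeness check."""
        empty = (None, "", [], {})
        return [name for name, value in asdict(self).items() if value in empty]


# Hypothetical record with the yield and analysis files not filled in yet
record = ExperimentRecord(
    operator="A. Chemist",
    run_date=date(2023, 7, 19),
    equipment_id="reactor-02",
    reagent_batches={"catalyst": "LOT-0001"},
    procedure_ref="SOP-12",
    parameters={"temperature_C": 80.0, "time_h": 4.0, "stirring_rpm": 600.0},
    conversion_pct=95.0,
)
print(record.missing_fields())  # ['yield_pct', 'analysis_files']
```

Keeping parameters and context in one structure makes it easy to spot incomplete entries before they reach an analysis or a model.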

Having high-quality data is not enough; you also want to have a high data diversity [2]. For example, if you want to use some experimental data to train a machine learning model to predict the outcome of that experiment depending on its parameters, you want your experimental data to contain a variety of values for these experimental parameters (i.e. well-distributed over the range of parameter values you want your model to cover) and outcomes.
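One simple way to check whether a parameter is well-distributed over the range of interest is to split that range into equal-width bins and count the experiments in each. The sketch below assumes a hypothetical target range of 20 to 100 °C and made-up temperatures; the function name `bin_counts` and the number of bins are illustrative choices only.

```python
def bin_counts(values, low, high, n_bins=5):
    """Count how many values fall into each of n_bins equal-width bins
    covering [low, high]. Values outside the range are ignored."""
    counts = [0] * n_bins
    width = (high - low) / n_bins
    for v in values:
        if low <= v <= high:
            # The upper bound itself goes into the last bin
            idx = min(int((v - low) / width), n_bins - 1)
            counts[idx] += 1
    return counts


# Hypothetical reaction temperatures (°C) already present in the dataset
temperatures_C = [60, 62, 65, 61, 63, 64, 60, 98]

counts = bin_counts(temperatures_C, low=20, high=100, n_bins=4)
empty_bins = [i for i, c in enumerate(counts) if c == 0]
print(counts)      # [0, 0, 7, 1]
print(empty_bins)  # [0, 1]: nothing recorded between 20 and 60 °C
```

Empty or sparse bins point to regions where a model trained on this data would have little to learn from.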

Contrary to what may seem intuitive, this variety includes failed experiments, which are as important as successful experiments for machine learning, as shown in this article. If the data diversity is too low, you risk obtaining a machine learning model that is not accurate over the full range of values of the experiment parameters, and you risk overfitting (a common problem in machine learning). In a previous blog article, we showed how Bayesian optimisation generates new experiments that increase the diversity of a dataset.

The third characteristic of data is its quantity. If your data quantity is too low, the insights you could get from data analysis will be limited and the machine learning models you could train may suffer from low accuracy (e.g. because of overfitting) [3]. If you think your data quantity is insufficient, using active learning methods such as Bayesian optimisation is an excellent way of guiding the generation of new data that will increase both the size and the diversity of your dataset (as explained in the previous paragraph).
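The effect of a small dataset on overfitting can be illustrated with a toy example. The sketch below uses a 1-nearest-neighbour model and leave-one-out cross-validation, both chosen only for simplicity, on five made-up (temperature, yield) points. The model reproduces its training data perfectly, yet its error on held-out points is much larger.

```python
def one_nn_predict(train, x):
    """Predict y for x from the closest training point (1-nearest neighbour)."""
    nearest = min(train, key=lambda point: abs(point[0] - x))
    return nearest[1]


def mean_abs_error(train, test):
    """Mean absolute error of the 1-NN model built on `train`, evaluated on `test`."""
    return sum(abs(one_nn_predict(train, x) - y) for x, y in test) / len(test)


def leave_one_out_error(data):
    """Hold out each point in turn, predict it from the others, average the errors."""
    errors = []
    for i, (x, y) in enumerate(data):
        rest = data[:i] + data[i + 1:]
        errors.append(abs(one_nn_predict(rest, x) - y))
    return sum(errors) / len(errors)


# Hypothetical (temperature in °C, yield in %) pairs
data = [(40, 35.0), (50, 48.0), (60, 61.0), (70, 66.0), (80, 58.0)]

train_error = mean_abs_error(data, data)  # 0.0: the model memorises its data
loo_error = leave_one_out_error(data)     # error on points it has not seen
print(train_error, loo_error)
```

The gap between the two numbers is the symptom to watch for: with so few points, a good fit on the training data says little about accuracy on new experiments.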

This article is the first of a series of articles about chemistry data. Stay tuned for more in-depth articles!

References

[1] Controlling an organic synthesis robot with machine learning to search for new reactivity, Nature 2018

[2] Negative Data in Data Sets for Machine Learning Training, J. Org. Chem. 2023, 88, 9, 5239–5241

[3] Small sample size effects in statistical pattern recognition, IEEE 1991, pp. 261-262
